Driving Visual Environment Detection

D.V.E.D.

High-resolution driving environment extraction and analytics at large scale

ABOUT THE SYSTEM

Key features of our system

High-Resolution Analysis

High Robustness

High Accuracy

Large-Scale Image Analytics

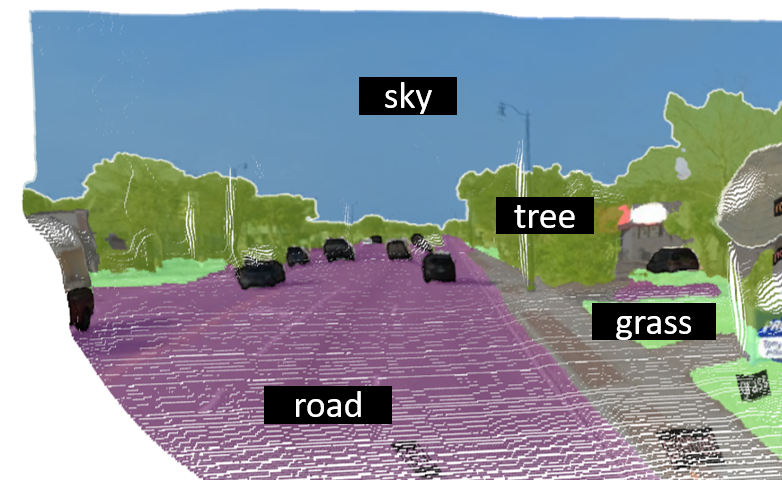

Semantic Segmentation

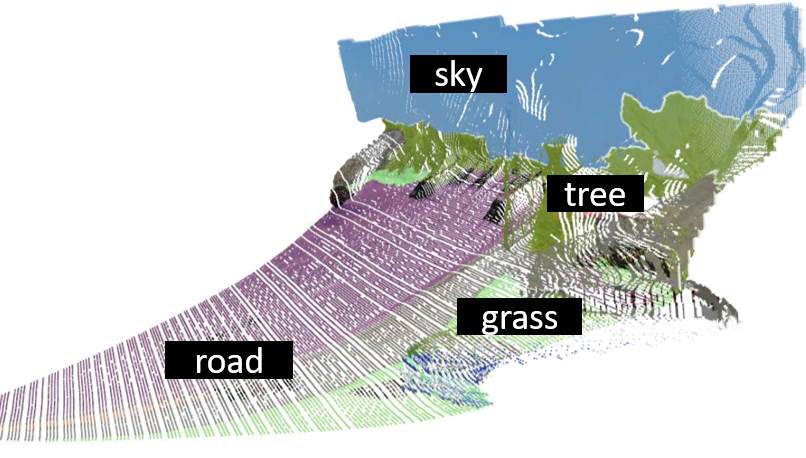

Depth Estimation

3D Driving Environment Estimation

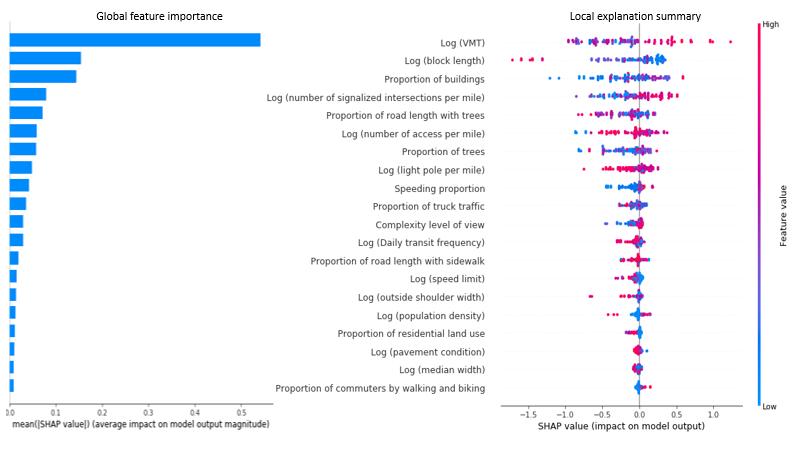

Fusion of Deep Learning Detection and Explainable Machine Learning

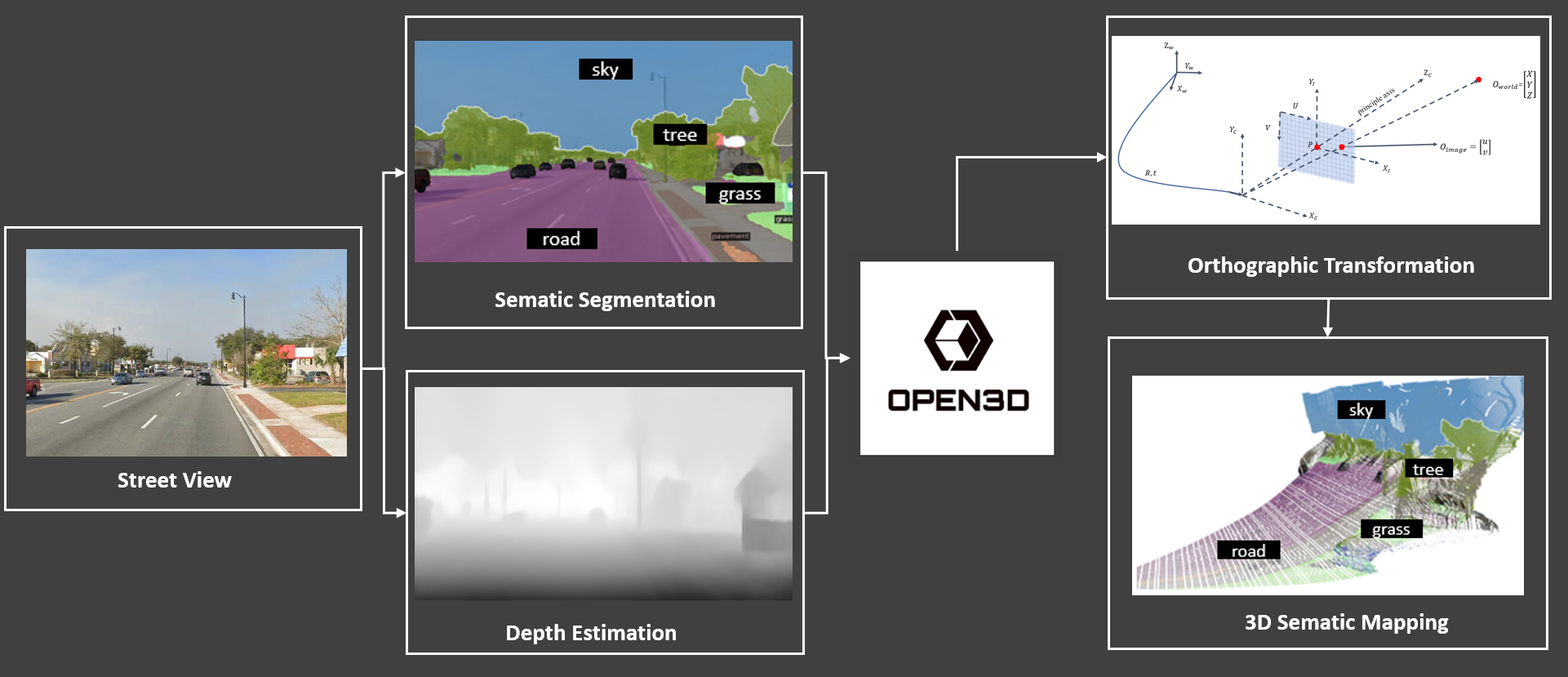

System Overview

This system extracts the driving visual environment along roads from street view images (e.g., Google Street View panoramas) and videos from vehicle-mounted cameras. First, semantic segmentation and depth estimation are performed to obtain a semantic class and a depth value for each pixel in the images or video frames. Then, an orthographic transformation is applied to convert the 2D images into a 3D representation that reflects the real-world driving view. With the proposed system, the following information can be generated from street view images and videos:

- Surrounding environments such as trees, buildings, signs, and driving complexity levels

- Safety blind spots for human-driven and automated vehicles

- Urban feature composition and urban greenery

- Walking environment for pedestrians and cycling environment for bicyclists
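
As an illustration of how an output such as urban feature composition or urban greenery might be derived from a segmentation result, the sketch below computes the share of pixels per class in a label map. It is a minimal example under stated assumptions, not the system's own code: the class IDs, the `CLASS_NAMES` table, and the `class_composition` helper are hypothetical, and a real segmentation model defines its own label set.

```python
from collections import Counter

# Hypothetical class IDs; a real segmentation model defines its own label set.
CLASS_NAMES = {0: "road", 1: "building", 2: "vegetation", 3: "sky", 4: "sign"}


def class_composition(label_map):
    """Return the share of pixels per class name for a 2D grid of class IDs."""
    counts = Counter(cid for row in label_map for cid in row)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {
        CLASS_NAMES.get(cid, f"class_{cid}"): n / total
        for cid, n in counts.items()
    }


# Toy 3x4 label map standing in for one segmented street view image.
labels = [
    [3, 3, 3, 3],
    [2, 2, 1, 1],
    [0, 0, 0, 2],
]

composition = class_composition(labels)
# Share of pixels labeled as vegetation, one simple proxy for visible greenery.
greenery_share = composition.get("vegetation", 0.0)
print(composition)
print(greenery_share)
```

Averaging such per-image shares over many images along a road segment would give a segment-level composition; how the system actually aggregates its results is not specified here.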

The system can be applied to street-level images and videos collected at different types of road facilities, such as freeways, arterials, intersections, bike lanes, and sidewalks.
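
To make the 2D-to-3D step in this overview more concrete, the following sketch combines a per-pixel depth map and a per-pixel class map into a set of labeled 3D points. It assumes a simple pinhole camera model with focal lengths `fx`, `fy` and principal point `cx`, `cy`; this is a swapped-in, commonly used technique chosen for illustration and may differ from the transformation the system applies, especially for panoramic images. The function name `lift_to_3d` and all parameter values are hypothetical.

```python
def lift_to_3d(depth_map, label_map, fx, fy, cx, cy):
    """Back-project each pixel with valid depth into camera coordinates.

    Assumes a pinhole camera (an assumption for this sketch):
        X = (u - cx) * Z / fx,  Y = (v - cy) * Z / fy,  Z = depth
    Returns a list of (X, Y, Z, class_id) tuples.
    """
    points = []
    for v, (depth_row, label_row) in enumerate(zip(depth_map, label_map)):
        for u, (z, cid) in enumerate(zip(depth_row, label_row)):
            if z <= 0:
                # Skip pixels without a usable depth estimate.
                continue
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z, cid))
    return points


# Toy 2x2 inputs: depth in meters and hypothetical class IDs (3 = sky, 0 = road).
depth = [[10.0, 10.0], [5.0, 0.0]]
labels = [[3, 3], [0, 0]]

cloud = lift_to_3d(depth, labels, fx=1.0, fy=1.0, cx=0.5, cy=0.5)
for point in cloud:
    print(point)
```

Once every point carries both a position and a class, quantities such as the distance to the nearest building or an occluded region along the road can in principle be measured in 3D rather than in image space.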

Our Performance

Our Example

What we’ve done for safety